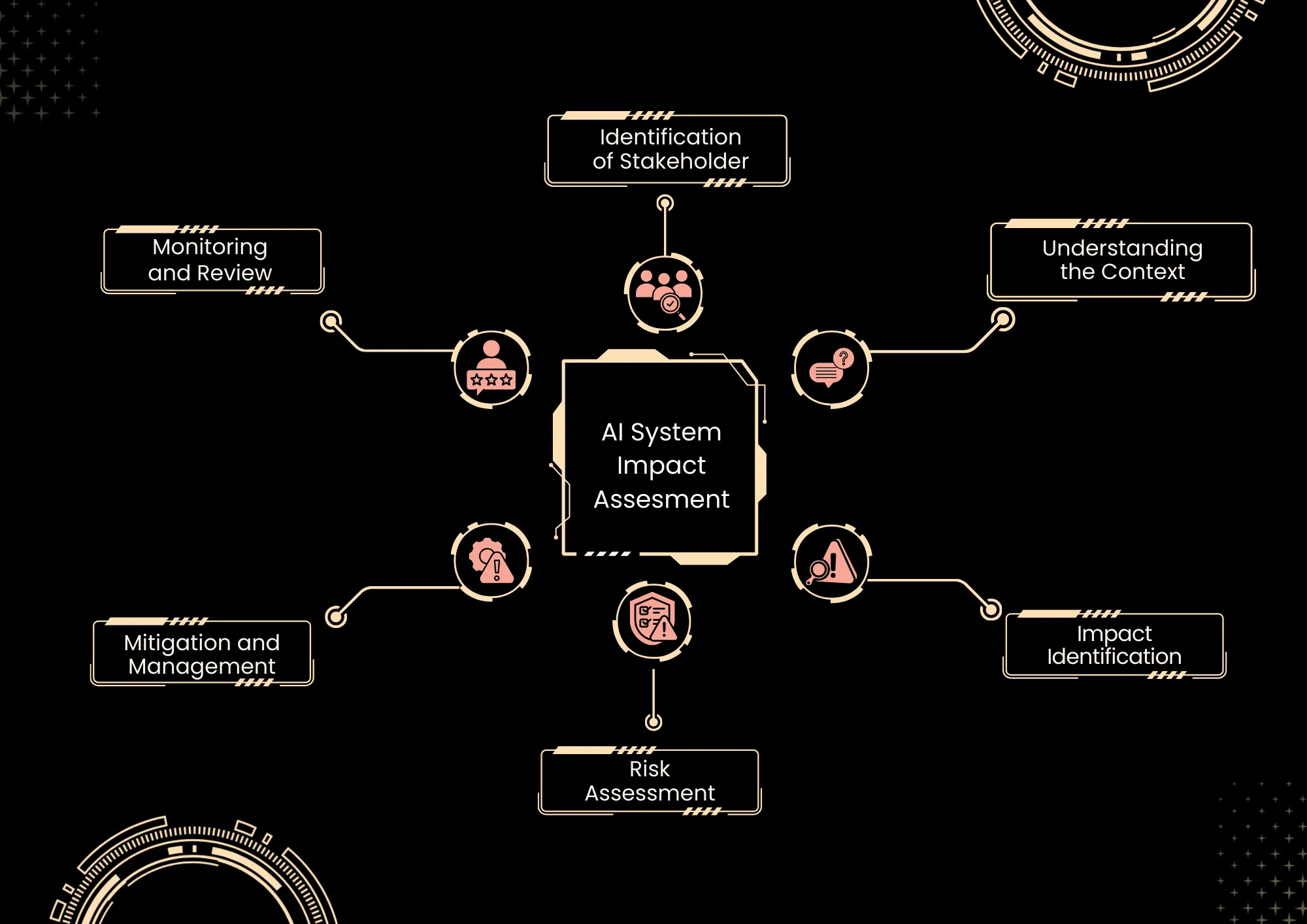

An AI system impact assessment is a structured evaluation of the potential effects, both positive and negative, of an AI system on individuals, society, and the environment. Its purpose is to ensure responsible, equitable, and compliant deployment by examining technical, ethical, legal, and societal factors. The process typically involves defining the system, mapping data, identifying risks such as bias, privacy concerns, and misuse, assessing impacts, developing mitigation strategies, and implementing continuous monitoring to uphold governance and foster trust.

The AI system impact assessment is a formalised method for determining how an AI solution may influence people, organisations, and society, and for responsibly managing those effects throughout the AI lifecycle. It underpins sound governance by translating abstract AI risks into concrete, documented impacts and mitigations that are understandable to boards, regulators, and customers.

These assessments have evolved from traditional impact analyses, such as privacy, environmental, and safety assessments. The evolution has been driven by increasing concern over opaque and high‑risk AI applications in critical domains. As regulations and standards, such as ISO/IEC 42001 and ISO/IEC 42005, emerge, organisations are increasingly expected to demonstrate systematic evaluation and management of AI risks and impacts.

Modern AI deployments involve sensitive data, automated decision-making, and implications for employment, safety, and human rights. This makes ad hoc risk assessments inadequate. Impact assessment provides a systematic, repeatable framework for understanding not only technical failure modes but also the ethical, legal, and societal consequences before and after deployment.

Benefits of AI System Impact Assessments

- Adherence to Global Standards

  - By implementing clause 6.1.4 (AI system impact assessment) of ISO/IEC 42001, your AI management system aligns with internationally recognised best practices in the oversight of artificial intelligence.

- Enhanced Risk Assessment

  - The assessment centres on identifying and evaluating the risks associated with artificial intelligence. Implementing it enables you to systematically identify potential threats and develop mitigation strategies, thereby reducing their impact on your organisation.

- Refined Decision-Making Frameworks

  - The assessment requires you to consider ethical dimensions and societal implications when making decisions about artificial intelligence. Incorporating this provision helps ensure your decision-making is well-informed and ethically grounded.

- Building Stakeholder Trust

  - By following clause 6.1.4, you demonstrate your commitment to responsible and ethical AI practices, which can bolster trust among stakeholders such as customers, employees, and investors.

- Compliance With Legal Regulations

  - Conducting an AI system impact assessment supports compliance with applicable laws, regulations, and guidelines governing artificial intelligence, including the principles of fairness, transparency, and accountability that data protection laws require and AI ethics frameworks promote.

Cyberverse Approach

Our approach to AI system impact assessment follows a structured, risk-based methodology aligned with ISO/IEC 42001 and Australian voluntary AI safety standards, tailored for cybersecurity and compliance-focused organisations. It integrates proven risk techniques, such as Business Impact Analysis (BIA) and bow-tie analysis, to quantify AI-specific impacts on operations, privacy, fairness, and security.

Assessments emphasise stakeholder engagement, continuous monitoring, and documentation to build trust and demonstrate due diligence to regulators such as the Australian Prudential Regulation Authority (APRA) and the Office of the Australian Information Commissioner (OAIC). We prioritise high-risk AI use cases involving sensitive data or automated decision-making, ensuring that mitigations address the technical, ethical, and societal dimensions.

The process remains iterative, with reassessments triggered by model changes, new regulations, or incidents, embedding AI governance into your broader cyber risk framework.

- Define and Scope

- Map Data Flows

- Identify and Analyse Risks

- Evaluate and Mitigate

- Review Legal and Ethical Compliance

- Document Findings

- Monitor and Review
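
As a rough illustration only, the steps above and the reassessment triggers mentioned earlier (model changes, new regulations, incidents) could be tracked in code along the following lines. This Python sketch is not drawn from ISO/IEC 42001 or any specific tool. The class names, the 1 to 5 likelihood and severity scales, the threshold of 15, and the choice to restart from the first step on a trigger are all hypothetical assumptions made for the example.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Step(Enum):
    """Assessment steps, in the order listed above."""
    DEFINE_AND_SCOPE = 1
    MAP_DATA_FLOWS = 2
    IDENTIFY_AND_ANALYSE_RISKS = 3
    EVALUATE_AND_MITIGATE = 4
    REVIEW_LEGAL_AND_ETHICAL_COMPLIANCE = 5
    DOCUMENT_FINDINGS = 6
    MONITOR_AND_REVIEW = 7


# Events that reopen an assessment (labels are hypothetical).
REASSESSMENT_TRIGGERS = {"model_change", "new_regulation", "incident"}


@dataclass
class Risk:
    description: str
    likelihood: int       # assumed scale: 1 (rare) to 5 (almost certain)
    severity: int         # assumed scale: 1 (negligible) to 5 (severe)
    mitigation: str = ""  # empty string means no mitigation recorded yet

    @property
    def score(self) -> int:
        # Simple likelihood x severity product; an assumption, not a mandated formula.
        return self.likelihood * self.severity


@dataclass
class ImpactAssessment:
    system_name: str
    completed: list = field(default_factory=list)
    risks: list = field(default_factory=list)

    def next_step(self) -> Optional[Step]:
        """Return the first step not yet completed, or None when all are done."""
        for step in Step:
            if step not in self.completed:
                return step
        return None

    def complete(self, step: Step) -> None:
        """Mark a step as done, enforcing the listed order."""
        expected = self.next_step()
        if step is not expected:
            raise ValueError(f"expected {expected}, got {step}")
        self.completed.append(step)

    def unmitigated_high_risks(self, threshold: int = 15) -> list:
        """Risks at or above the (assumed) threshold with no mitigation recorded."""
        return [r for r in self.risks if r.score >= threshold and not r.mitigation]

    def handle_event(self, event: str) -> bool:
        """Restart the assessment if the event is a reassessment trigger."""
        if event not in REASSESSMENT_TRIGGERS:
            return False
        self.completed.clear()
        return True


if __name__ == "__main__":
    assessment = ImpactAssessment("example-screening-model")
    assessment.complete(Step.DEFINE_AND_SCOPE)
    assessment.risks.append(Risk("Biased outcomes for a protected group", 4, 5))
    print(assessment.next_step())
    print(assessment.unmitigated_high_risks())
    print(assessment.handle_event("model_change"))
```

In practice, an organisation might restart only from the risk identification step rather than from scoping, or use a different scoring model altogether; the sketch simply shows how the steps, risk records, and triggers relate to one another.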