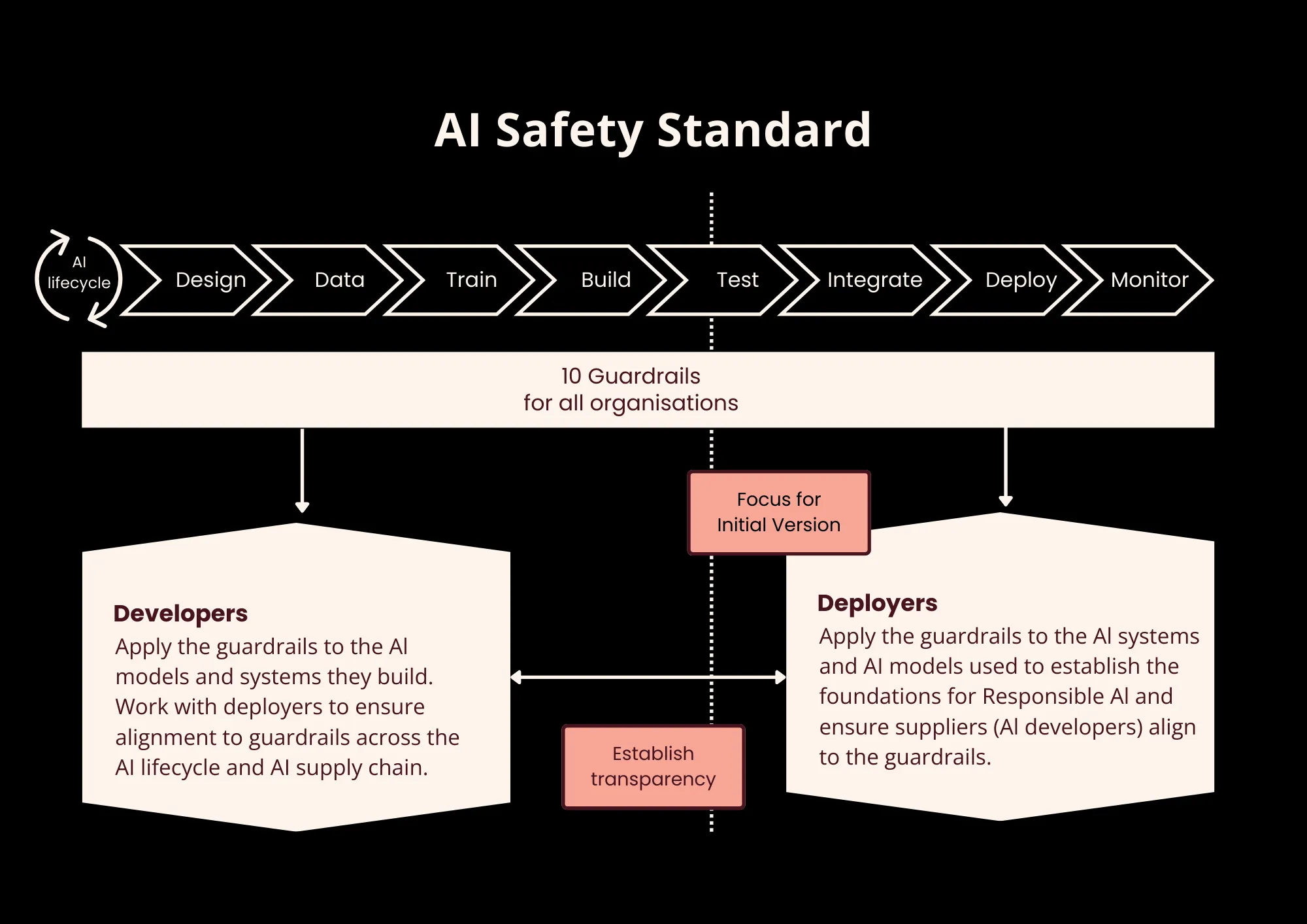

Australia's Voluntary AI Safety Standard aims to help organizations build a foundation for responsible AI by focusing on risk management, safety testing, transparency, accountability, and security. Its guardrails align with other responsible AI frameworks and regulations, such as the NIST AI RMF, ISO/IEC 42001, and the EU AI Act, so adopting the Standard early can help organizations prepare for future regulatory obligations.

Although the Standard is currently voluntary, organizations are encouraged to implement all ten guardrails.

The Standard is meant to be used by any organization that wishes to build a foundation for responsible and secure AI usage.

The Standard consists of ten guardrails. Each guardrail is broken down into actionable controls that give more concrete guidance on how to implement it.

Some requirements apply to specific AI systems, and some apply to the organization.

The ten guardrails are:

- Establish a governance program with appropriate accountability

- Establish an AI risk management program

- Apply security and data governance measures

- Test AI thoroughly before deployment and monitor after

- Enable appropriate human oversight throughout the AI lifecycle

- Provide end-users with transparency around the use of AI

- Provide end-users with a way to challenge the usage or outcomes of AI

- Disclose information to other actors in the AI supply chain

- Maintain records that allow third parties to assess compliance with the Standard

- Solicit stakeholder feedback

Notably, the Voluntary AI Safety Standard complements the government’s broader Safe and Responsible AI agenda, which includes the development of mandatory guardrails for high-risk AI settings. These mandatory guardrails are closely aligned with the voluntary measures in the Standard. The alignment signals the government’s intent to transition these voluntary guidelines into enforceable regulations, encouraging organisations to proactively adopt these practices now to ease future compliance.

By adopting the Voluntary AI Safety Standard, organisations can help ensure that individuals and businesses use AI safely and to its full potential while minimizing risks to data security and human rights.

Benefits of Adopting the AI Safety Standard

- Risk Mitigation and Safety: The guardrails provide a framework to identify and manage potential AI-related harms to people and communities.

- Trust and Reputation: Implementing the Standard builds confidence among users and stakeholders, demonstrating a commitment to safe, ethical, and responsible AI.

- Future-Proofing Compliance: The voluntary Standard acts as a precursor to upcoming mandatory AI legislation in Australia, allowing organizations to prepare early.

- International Alignment: The Standard aligns with international frameworks like ISO/IEC 42001 and the NIST AI RMF, facilitating smoother operations in global markets.

- Operational Guidance: The Standard provides a clear, practical, and auditable checklist for AI governance, from testing and documentation to human oversight and stakeholder engagement.

Cyberverse Approach

Cyberverse helps organisations shift from ad-hoc AI usage to structured, risk-based governance frameworks. The approach emphasizes embedding safety throughout the entire AI lifecycle, from initial design to decommissioning.

Here are the ways Cyberverse helps organisations operationalize Australia’s Voluntary AI Safety Standard:

- Establish Accountability: Appoint an AI lead in charge of strategy, oversight, and ensuring AI is safely utilized throughout the organisation.

- Develop an AI Strategy: Establish a structured approach to implementing AI that covers objectives, risk controls, and ethical standards.

- Implement Risk Management Processes: Regularly assess AI risks and impacts, and apply controls to mitigate possible negative effects.

- Set Up Data Governance and Cybersecurity: Develop robust cybersecurity, privacy, and data handling procedures adapted to AI's unique needs, such as data provenance and quality.

- Test and Monitor Thoroughly: Test AI systems before deployment, then monitor them afterwards to identify any unexpected impacts or changes in behaviour.

- Enable Human Oversight: Establish mechanisms that enable human intervention to handle unforeseen issues at any stage of the AI lifecycle.

- Ensure Transparency and Disclosure: Clearly communicate AI use, roles, and when content is AI-generated, building trust with users and stakeholders.

- Provide Challenge and Appeal Mechanisms: Establish channels for impacted stakeholders to challenge AI decisions or outcomes.

- Share Information Across the AI Supply Chain: Collaborate with supply chain partners to understand and manage AI components, data sources, and AI risks.

- Document Compliance Efforts: Maintain records, including an AI inventory and supporting documentation, to demonstrate compliance with the Standard and applicable AI laws.

- Engage with Stakeholders: Regularly consult stakeholders to identify potential risks, address bias, and ensure AI accessibility, transparency and ethical fairness.
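
As an illustration of the record-keeping step above, an AI inventory can start as a simple structured record per AI system. The following is a minimal sketch in Python, not a format prescribed by the Standard or used by Cyberverse: the class name `AISystemRecord`, its fields, the three status values, and the short guardrail labels (paraphrased from the list earlier in this article) are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List


class Status(Enum):
    """Hypothetical implementation states for a guardrail."""
    NOT_STARTED = "not started"
    IN_PROGRESS = "in progress"
    IMPLEMENTED = "implemented"


# Short labels for the ten guardrails, paraphrased from this article.
# They are illustrative names, not official titles from the Standard.
GUARDRAILS = (
    "Governance and accountability",
    "Risk management",
    "Security and data governance",
    "Testing and monitoring",
    "Human oversight",
    "End-user transparency",
    "Challenge mechanisms",
    "Supply chain disclosure",
    "Record keeping",
    "Stakeholder engagement",
)


@dataclass
class AISystemRecord:
    """One entry in a hypothetical AI inventory."""
    name: str
    owner: str      # accountable person or team
    purpose: str
    # Every guardrail starts as NOT_STARTED for a newly registered system.
    status: Dict[str, Status] = field(
        default_factory=lambda: {g: Status.NOT_STARTED for g in GUARDRAILS}
    )

    def mark(self, guardrail: str, status: Status) -> None:
        """Update the status of one guardrail, rejecting unknown labels."""
        if guardrail not in self.status:
            raise KeyError(f"Unknown guardrail: {guardrail}")
        self.status[guardrail] = status

    def gaps(self) -> List[str]:
        """Return guardrails not yet fully implemented, in list order."""
        return [g for g in GUARDRAILS if self.status[g] is not Status.IMPLEMENTED]


# Example usage with made-up values.
record = AISystemRecord(
    name="support-chatbot",
    owner="AI lead",
    purpose="Customer support triage",
)
record.mark("Risk management", Status.IMPLEMENTED)
record.mark("Testing and monitoring", Status.IN_PROGRESS)

for guardrail in record.gaps():
    print(f"{record.name}: {guardrail} -> {record.status[guardrail].value}")
```

Keeping one such record per AI system gives reviewers a single place to see which guardrails still have open work. A real inventory would likely also capture evidence links, review dates, and supplier details.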